Personal Profile

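Brief Introduction

I entered the Computer Science and Technology program at Ningbo University in 2019, which is when I started learning CS. I love coding, have a geek spirit, enjoy exploring cutting-edge technology, and like to tinker.

Won 15+ competition awards in total at the university, provincial, national, and international levels (mainly academic-subject competitions and data science competitions).

Extensive research experience: 4 papers completed and 1 patent.

Extensive project experience: took on outsourced projects of various sizes both on and off campus (including Huawei Zhongzhi crowd-intelligence projects).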

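Education

Chongqing Middle School, Sichuan Province (四川省崇庆中学) (2016.9-2019.7), high school

Studying hard and going all out for the gaokao; a typical science-track guy

- My high-school tags

- Literary youth

- Sensitive ("glass heart")

- Tech enthusiast

- Small-town exam grinder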

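Ningbo University (2019.9-2023.7), undergraduate

MISL: attack and defense of adversarial examples in the digital domain

- My university tags

- Doer

- Secretly mischievous

- Spontaneous, ready to go at a moment's notice

- Slacker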

Zhejiang University (2023.9-future), direct-entry PhD

NESA Lab: distributed machine learning

- unknown status

- Won't know until I try

- Go, go, go!

Research

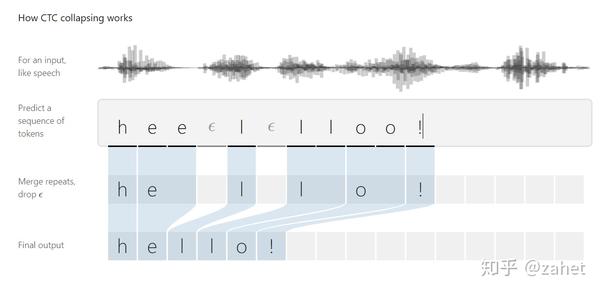

Automatic speech recognition (ASR) systems convert input speech into the corresponding text, and their performance has improved significantly with the development of deep learning techniques. However, by adding small, human-imperceptible perturbations to the original speech, an attacker can generate adversarial examples that make ASR systems produce unpredictable and even malicious results. Adversarial example attacks have therefore posed many security risks to deep learning-based ASR systems. In this paper, a systematic survey of adversarial example attacks and countermeasures is provided. First, we summarize the basic principles of deep learning-based ASR systems. Second, several major methods for generating adversarial examples against ASR systems are introduced. Correspondingly, the typical countermeasures against adversarial examples are also given. Finally, the challenges posed by adversarial examples are discussed, and several valuable research directions are proposed on how to make the generated attacks more realistic and how to enhance the robustness of ASR.

This paper proposes an approach to improve Non-Intrusive Speech Quality Assessment (NI-SQA) based on the residuals between impaired speech and enhanced speech. The main difficulty of this task is the lack of information, since the corresponding reference speech is absent. To compensate for the missing reference audio, we generate enhanced speech from the impaired speech and then pair the residuals with the impaired speech. Compared with feeding the impaired speech directly into the model, the residuals provide additional useful information derived from the contrast introduced by enhancement. The human ear is sensitive to certain noises to which deep learning models are not, so the Mean Opinion Score (MOS) predicted by a model does not fit subjective perception well and deviates from it. Because the residuals are closely related to the reference speech, they improve the ability of deep learning models to predict MOS. Experimental results show that, during training, models paired with residuals quickly reach better evaluation metrics under the same conditions. Furthermore, our final results improve PLCC and RMSE by 31.3% and 14.1%, respectively.
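The abstract above relies on two simple computations: the residual between the impaired speech and its enhanced version, and the PLCC and RMSE metrics used to report the final results. A minimal sketch of both follows, assuming time-aligned waveforms stored as plain Python lists and an enhanced signal produced by some speech-enhancement model that is not shown. The function names `residual`, `pair_with_residual`, `plcc` and `rmse` are hypothetical; this is an illustration, not the paper's implementation.

```python
import math
from typing import List, Sequence, Tuple


def residual(impaired: Sequence[float], enhanced: Sequence[float]) -> List[float]:
    """Sample-wise residual: impaired speech minus its enhanced version.

    Assumption: both signals are time-aligned and equally long; the enhanced
    signal comes from some (not shown) speech-enhancement model.
    """
    if len(impaired) != len(enhanced):
        raise ValueError("signals must have the same length")
    return [x - y for x, y in zip(impaired, enhanced)]


def pair_with_residual(impaired: Sequence[float],
                       enhanced: Sequence[float]) -> List[Tuple[float, float]]:
    """Pair each impaired sample with its residual (hypothetical 2-channel input)."""
    return list(zip(impaired, residual(impaired, enhanced)))


def plcc(pred: Sequence[float], target: Sequence[float]) -> float:
    """Pearson linear correlation coefficient between predicted and true MOS."""
    n = len(pred)
    mp = sum(pred) / n
    mt = sum(target) / n
    cov = sum((p - mp) * (t - mt) for p, t in zip(pred, target))
    sp = math.sqrt(sum((p - mp) ** 2 for p in pred))
    st = math.sqrt(sum((t - mt) ** 2 for t in target))
    return cov / (sp * st)


def rmse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Root-mean-square error between predicted and true MOS."""
    n = len(pred)
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, target)) / n)


if __name__ == "__main__":
    # Toy values only; not data from the paper.
    print(pair_with_residual([0.5, -0.2, 0.1], [0.4, -0.1, 0.1]))
    mos_true = [3.0, 4.0, 2.5, 4.5]
    mos_pred = [3.2, 3.8, 2.7, 4.4]
    print(plcc(mos_pred, mos_true), rmse(mos_pred, mos_true))
```

In a real pipeline the paired (impaired, residual) input would be fed to a MOS prediction network; a PLCC closer to 1 and a lower RMSE indicate better agreement with subjective scores.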

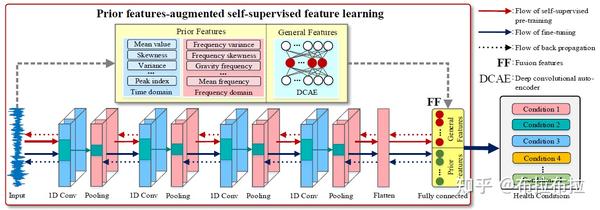

Despite the great progress of neural network-based (NN-based) machinery fault diagnosis methods, their robustness has been largely neglected, since they can easily be fooled by adding imperceptible perturbations to the input. In this paper, we reformulate various adversarial attacks for fault diagnosis problems and investigate them in depth under untargeted and targeted conditions. Experimental results on six typical NN-based models show that the accuracy of the models is greatly reduced by adding small perturbations. We further propose a simple, efficient and universal scheme to protect the victim models. This work provides an in-depth look at adversarial examples of machinery vibration signals, supporting the development of protection methods against adversarial attacks and the improvement of the robustness of NN-based models.
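The abstract above studies adversarial attacks under untargeted and targeted conditions but does not name the attacks. As one assumed example, the sketch below applies the fast gradient sign method (FGSM) to a toy linear softmax classifier, where the input gradient of the cross-entropy loss has the closed form W^T(softmax(Wx + b) - onehot(y)). The weights, the input vector and all helper names are hypothetical stand-ins for a model trained on vibration signals.

```python
import math
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def softmax(z: Sequence[float]) -> Vector:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def logits(W: Matrix, b: Sequence[float], x: Sequence[float]) -> Vector:
    """Linear layer: z = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + bk for row, bk in zip(W, b)]


def predict(W: Matrix, b: Sequence[float], x: Sequence[float]) -> int:
    z = logits(W, b, x)
    return max(range(len(z)), key=z.__getitem__)


def input_grad(W: Matrix, b: Sequence[float], x: Sequence[float], label: int) -> Vector:
    """dL/dx of cross-entropy w.r.t. the input: W^T (softmax(Wx + b) - onehot(label))."""
    p = softmax(logits(W, b, x))
    delta = [pk - (1.0 if k == label else 0.0) for k, pk in enumerate(p)]
    return [sum(W[k][j] * delta[k] for k in range(len(W))) for j in range(len(x))]


def sign(v: float) -> float:
    return float((v > 0) - (v < 0))


def fgsm(W: Matrix, b: Sequence[float], x: Sequence[float], label: int,
         eps: float, targeted: bool = False) -> Vector:
    """One FGSM step of size eps per coordinate.

    Untargeted: `label` is the true class; step up the loss gradient.
    Targeted:   `label` is the target class; step down the loss gradient.
    """
    g = input_grad(W, b, x, label)
    step = -eps if targeted else eps
    return [xj + step * sign(gj) for xj, gj in zip(x, g)]


if __name__ == "__main__":
    # Hypothetical 3-class model on a 2-feature input.
    W = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
    b = [0.0, 0.0, 0.0]
    x = [0.6, 0.4]
    x_u = fgsm(W, b, x, label=0, eps=0.3)                 # untargeted
    x_t = fgsm(W, b, x, label=2, eps=0.6, targeted=True)  # targeted at class 2
    print(predict(W, b, x), predict(W, b, x_u), predict(W, b, x_t))  # 0 1 2
```

The untargeted step pushes the input away from its true class, while the targeted step pulls it toward a chosen class; in both cases every coordinate moves by at most eps, which is what keeps the perturbation small. Stronger iterative attacks repeat such steps but are not shown here.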

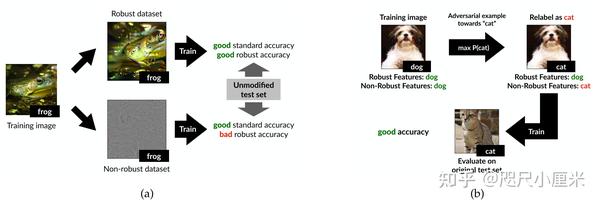

Deep neural networks (DNNs) have been shown to be vulnerable to adversarial examples (AEs), which are maliciously designed to fool target models. Normal examples (NEs) with imperceptible adversarial perturbations added can pose a security threat to DNNs. Although existing AE detection methods achieve high accuracy, they fail to exploit the information in the detected AEs. Therefore, based on high-dimension perturbation extraction, we propose a model-free AE detection method whose whole process requires no queries to the victim model. Research shows that DNNs are sensitive to high-dimension features, and the adversarial perturbation hidden in an AE is such a feature: highly predictive but non-robust. DNNs learn more detail from high-dimension data than from other data. In our method, the perturbation extractor extracts the adversarial perturbation from an AE as a high-dimension feature, and a trained AE discriminator then determines whether the input is an AE. Experimental results show that the proposed method not only detects AEs with high accuracy but also identifies their specific category. In addition, the extracted perturbation can be used to recover NEs from AEs.

Projects

Custom academic homepages (webchat)

Django MySQL Bootstrap

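GitHub: https://github.com/xaddwell/Django_Blog_Academy_Manage

Responsible for the front end, server architecture, and back-end development

- Refactored several times, with a focus on performance optimization and user experience.

- Personalized homepages accessible via custom domain names

- Logging system and RSA encryption of messages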

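Skills

Web fundamentals

- Proficient in HTML5/CSS3, responsive layout, and mobile development

- Working knowledge of Vue and Django

Data processing

- Familiar with data processing based on numpy, pandas, and torch

- Familiar with web crawling, with some project experience

Machine learning

- Familiar with traditional machine learning and deep learning algorithms

- Able to quickly select an algorithm for a target task and implement it

- Good debugging skills

Databases

- MySQL

- GaussDB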